|

Chris Lu

I'm currently a researcher at OpenAI, where my work focuses on accelerating open-ended systems and automated AI research. My best-known works are The AI Scientist and the PureJaxRL ecosystem. Previously, I was a PhD student at the University of Oxford, advised by Professor Jakob Foerster at FLAIR, where I focused on automating open-ended discovery and multi-agent systems. I previously interned at Sakana.ai and at DeepMind as a research scientist. Before that, I worked as a researcher at Covariant.ai after graduating from UC Berkeley.

Google Scholar / Twitter / GitHub / LinkedIn |

|

Chris Lu*, Cong Lu*, Robert Tjarko Lange*, Yutaro Yamada*, Shengran Hu, Jakob Foerster, David Ha, Jeff Clune *Equal Contribution Nature 2026 |

|

Akarsh Kumar, Chris Lu, Louis Kirsch, Yujin Tang, Kenneth O. Stanley, Phillip Isola, David Ha Artificial Life 2025 |

|

Eduardo Pignatelli, Jarek Liesen, Robert Tjarko Lange, Chris Lu, Pablo Samuel Castro, Laura Toni NeurIPS 2025 Datasets and Benchmarks Track |

|

Michael Matthews*, Michael Beukman*, Chris Lu, Jakob Foerster *Equal Contribution ICLR 2025 (Oral) |

|

Carlo Alfano, Sebastian Towers, Silvia Sapora, Chris Lu, Patrick Rebeschini ICLR 2025; AutoRL Workshop @ ICML 2024 |

|

Chris Lu*, Samuel Holt*, Claudio Fanconi*, Alex J. Chan, Jakob Foerster†, Mihaela van der Schaar†, Robert Tjarko Lange† *Equal Contribution, †Equal Advising NeurIPS 2024; AutoRL Workshop @ ICML 2024 |

|

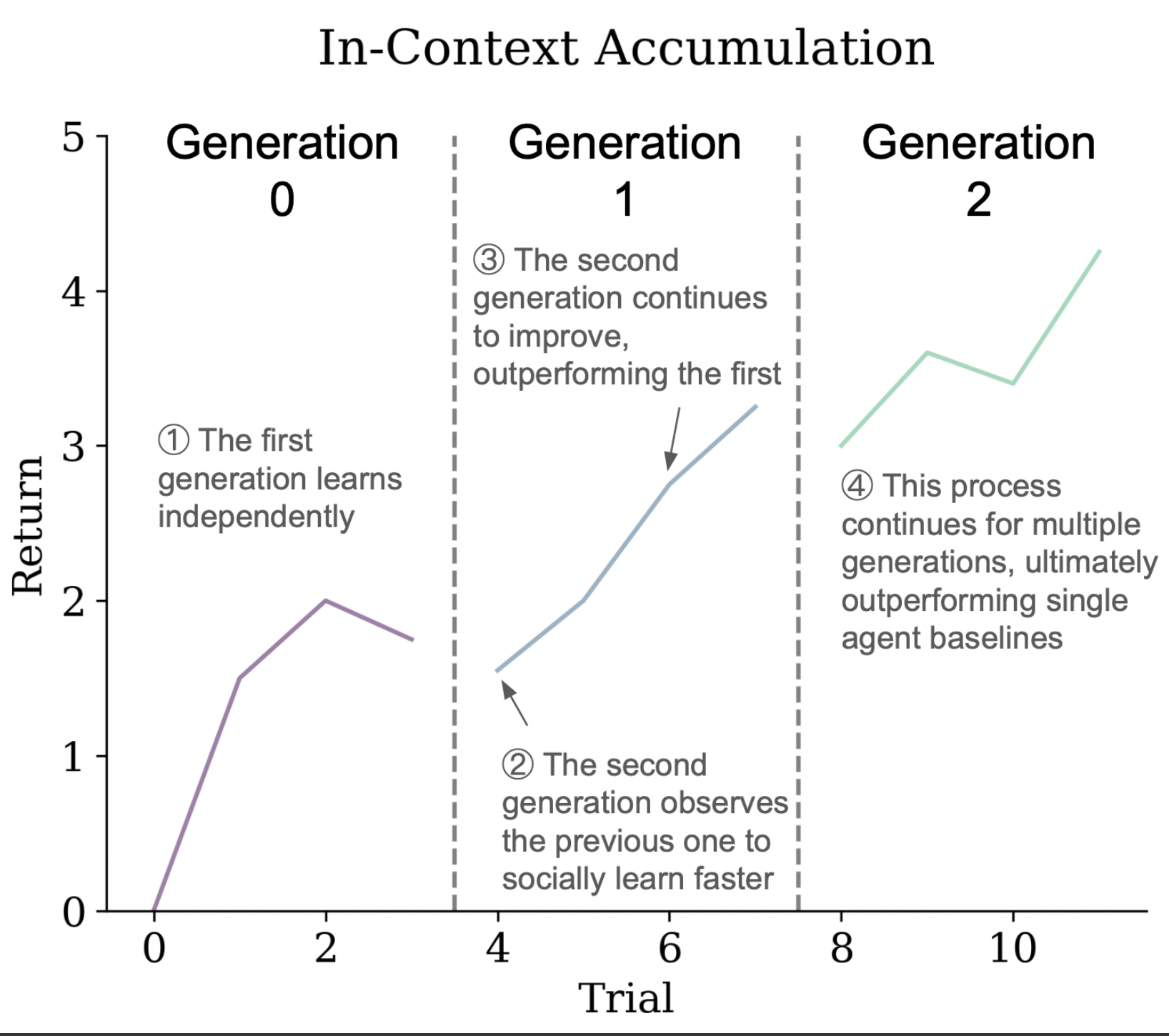

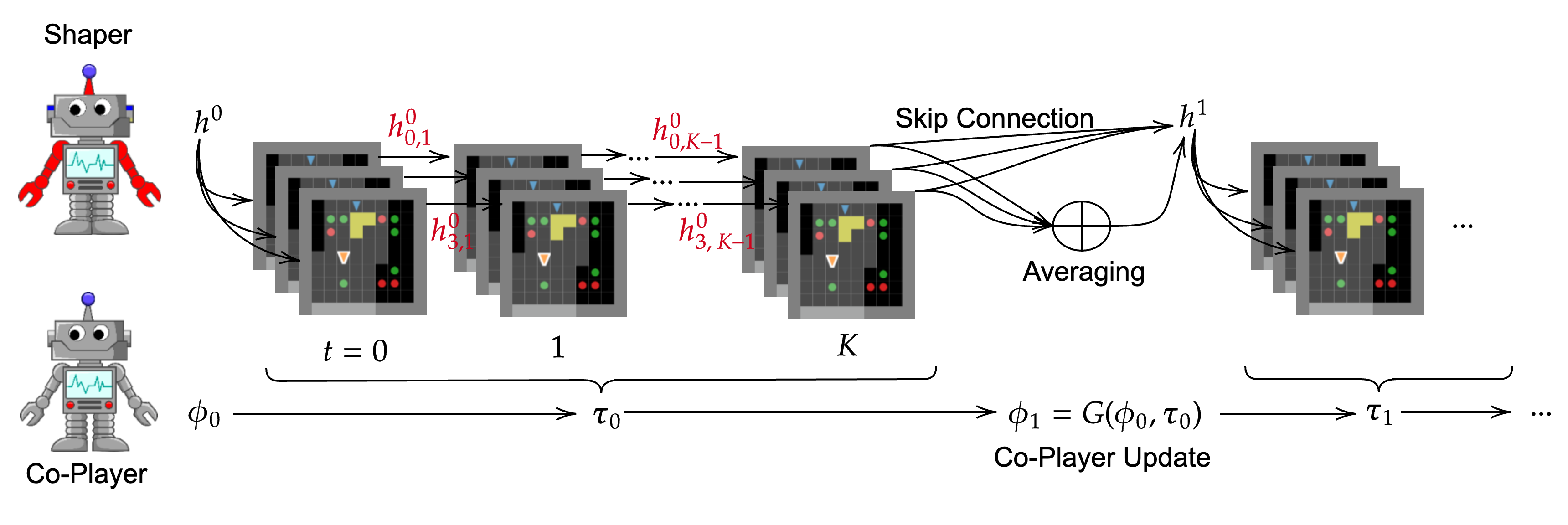

Jonathan Cook*, Chris Lu*, Edward Hughes, Joel Z. Leibo, Jakob Foerster *Equal Contribution NeurIPS 2024 |

|

Alexander Rutherford*†, Benjamin Ellis*†, Matteo Gallici*†, Jonathan Cook†, Andrei Lupu†, Gardar Ingvarsson†, Timon Willi†, Ravi Hammond, Akbir Khan, Christian Schroeder de Witt, Alexandra Souly, Saptarashmi Bandyopadhyay, Mikayel Samvelyan, Minqi Jiang, Robert Tjarko Lange, Shimon Whiteson, Bruno Lacerda, Nick Hawes, Tim Rocktäschel, Chris Lu*†, Jakob Nicolaus Foerster *Equal Contribution, †Core Contribution NeurIPS 2024 Also at the NeurIPS 2023 Workshop on Agent Learning in Open-Endedness |

|

Alexander David Goldie, Chris Lu, Matthew Thomas Jackson, Shimon Whiteson, Jakob Foerster NeurIPS 2024 (Spotlight); AutoRL Workshop @ ICML 2024 (Spotlight) |

|

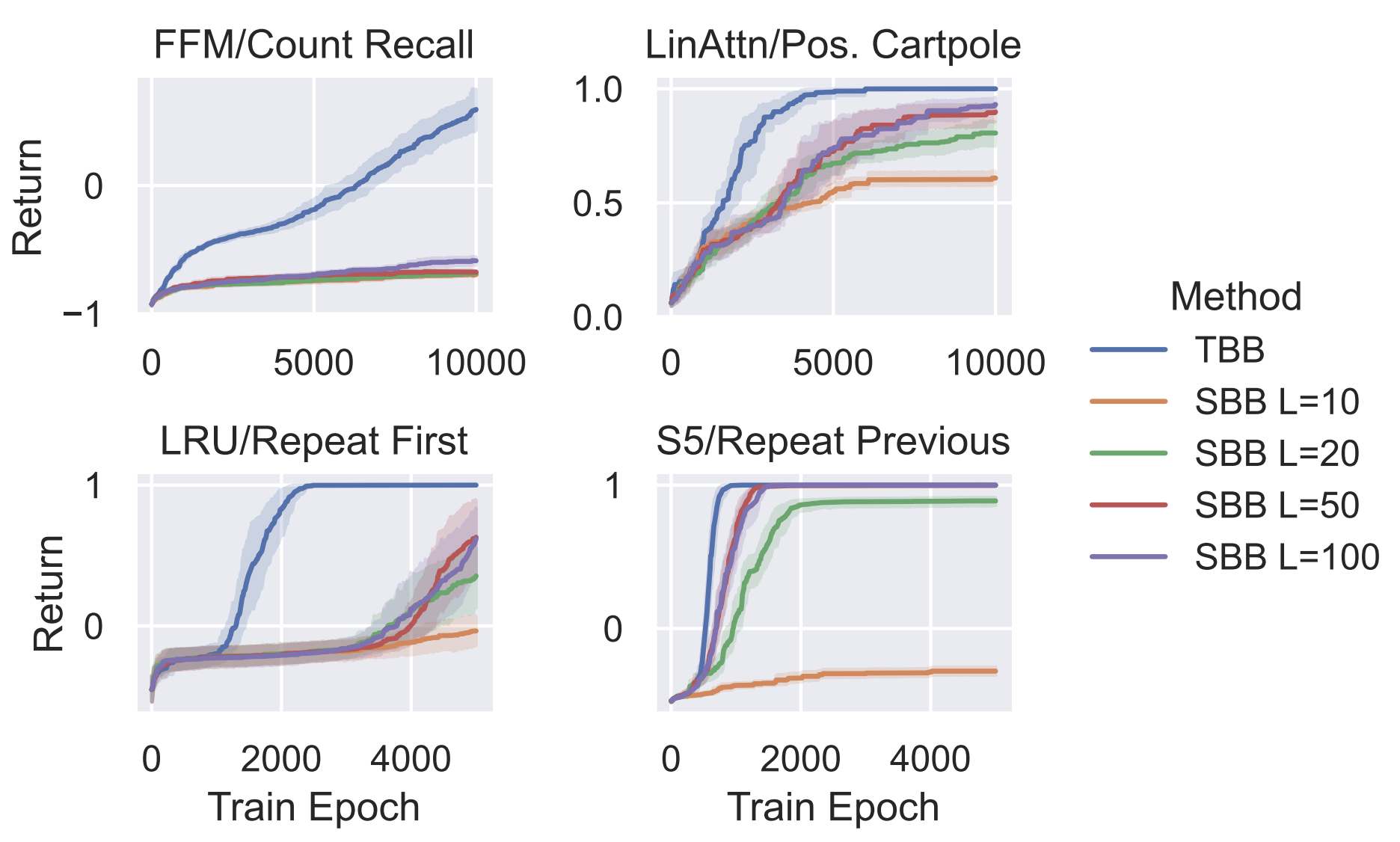

Steven Morad, Chris Lu, Ryan Kortvelesy, Stephan Liwicki, Jakob Foerster, Amanda Prorok NeurIPS 2024 |

|

Chris Lu*, Michael Beukman*, Michael Matthews, Jakob Foerster *Equal Contribution ALIFE 2024 (Oral) |

|

Andrew Jesson, Chris Lu, Gunshi Gupta, Angelos Filos, Jakob Nicolaus Foerster, Yarin Gal ICML 2024 |

|

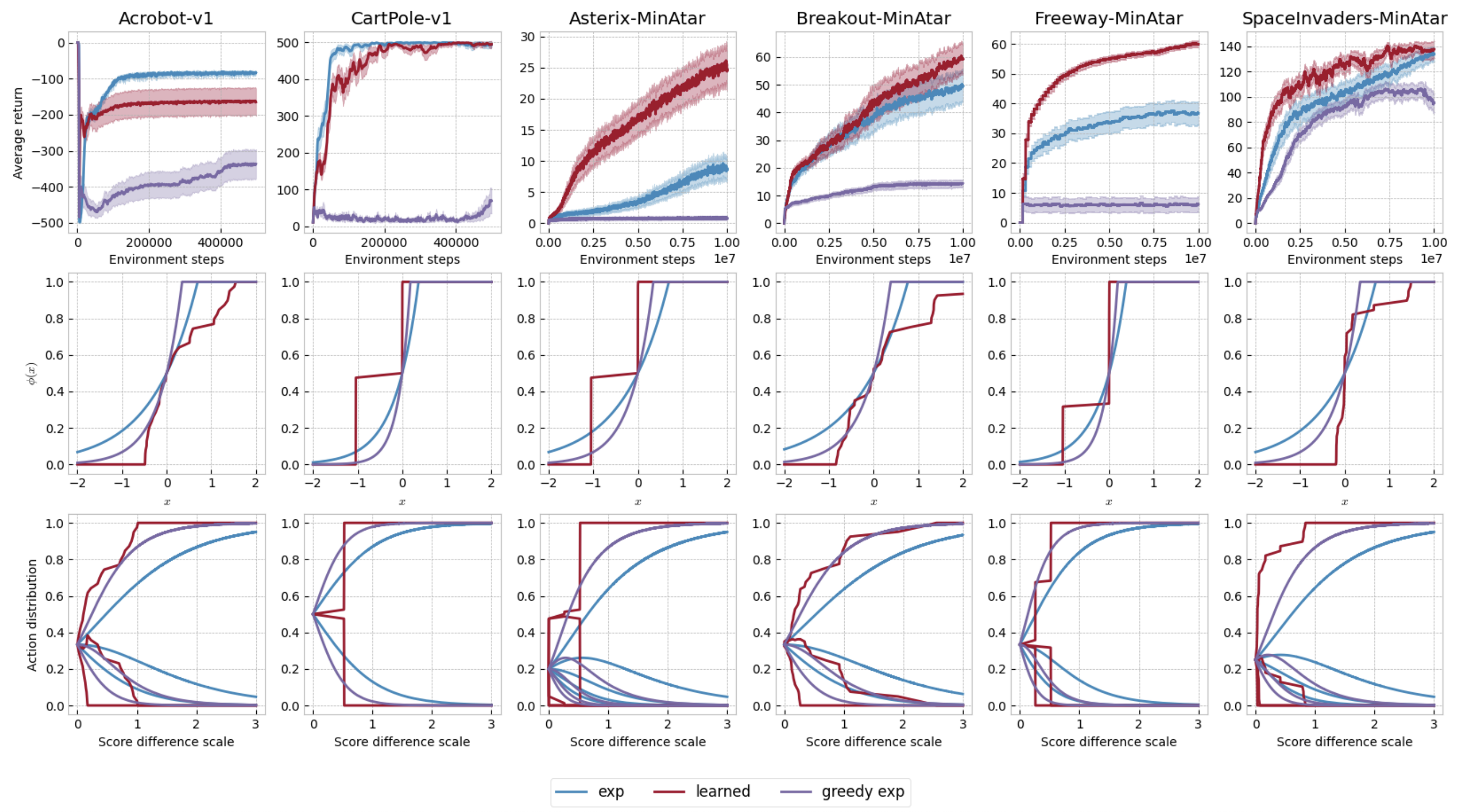

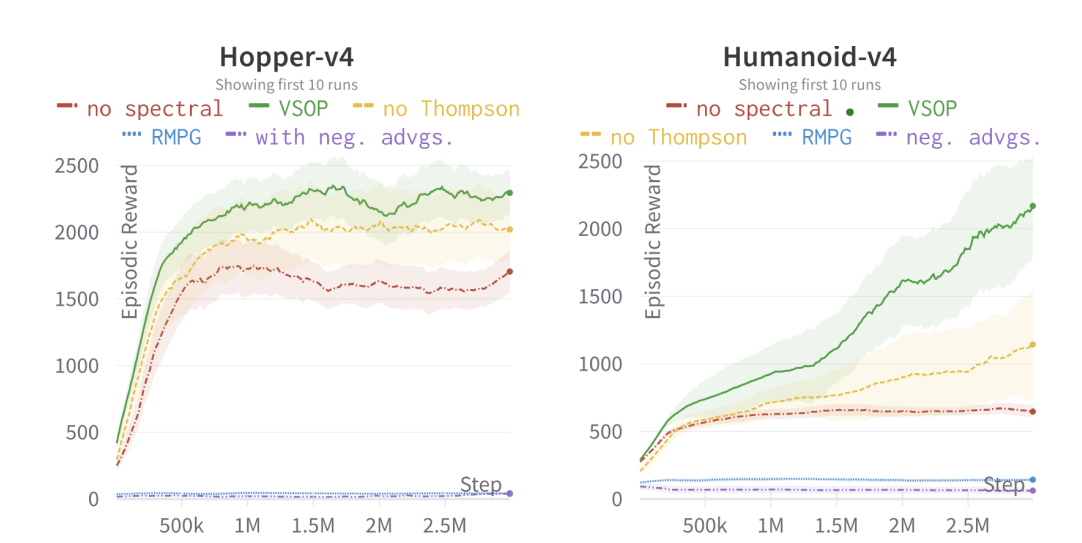

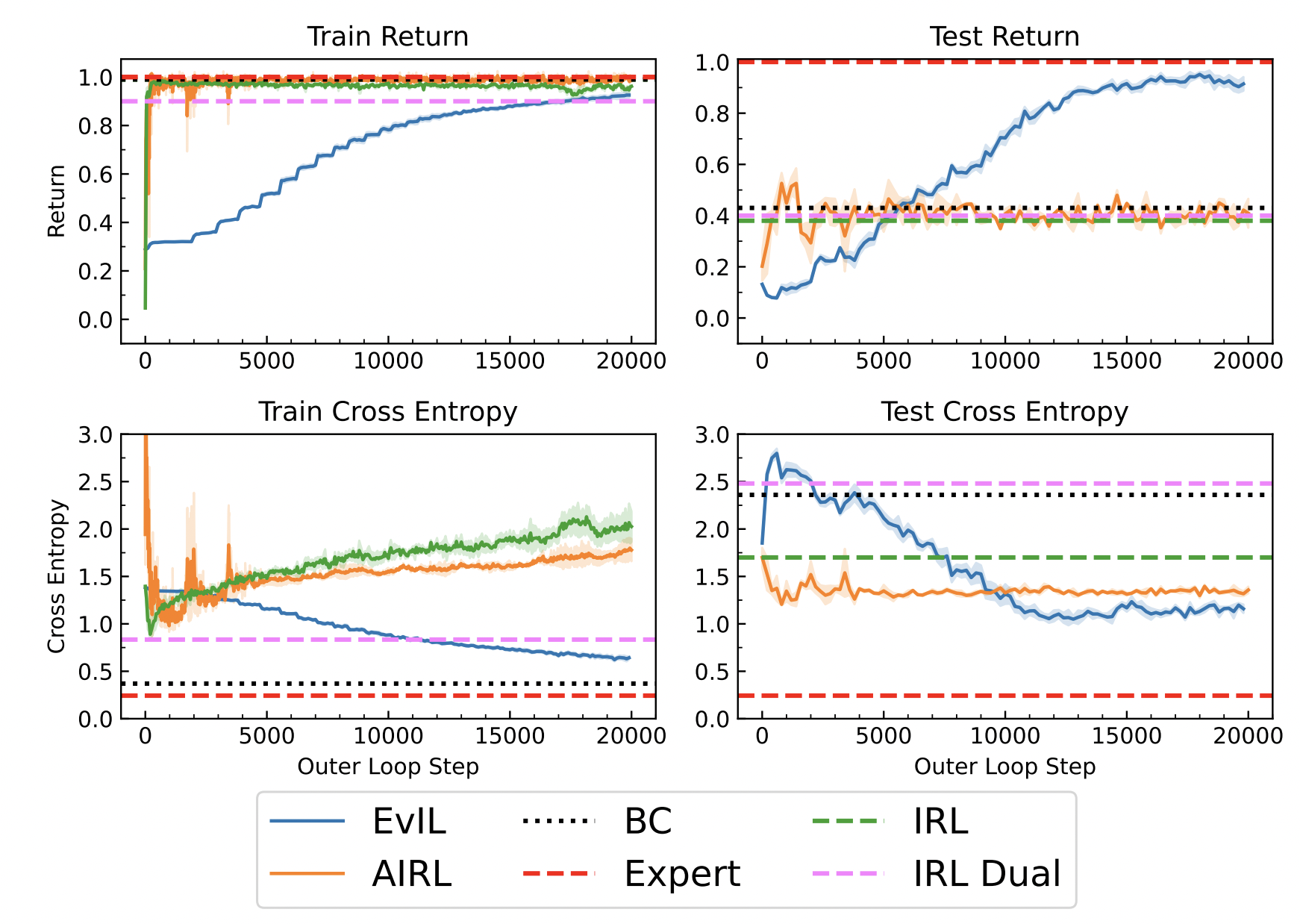

Silvia Sapora, Gokul Swamy, Chris Lu, Yee Whye Teh, Jakob Nicolaus Foerster ICML 2024 Also at the NeurIPS 2023 Robot Learning Workshop |

|

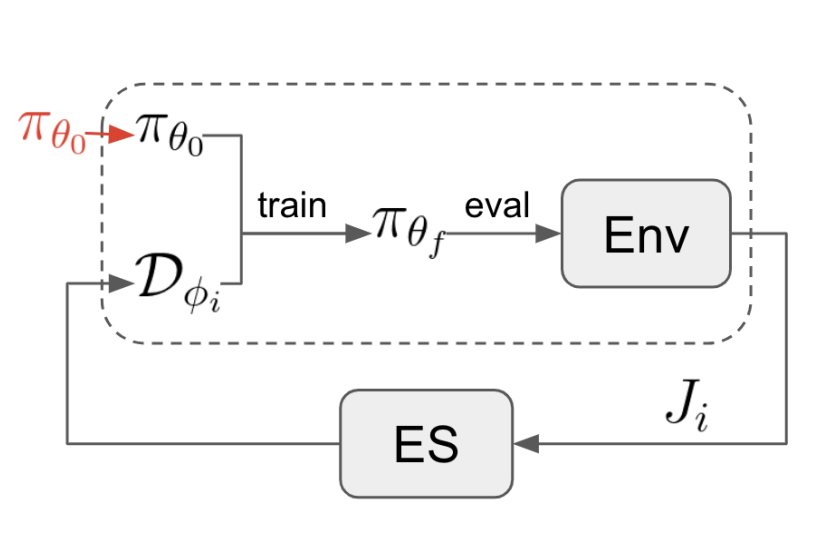

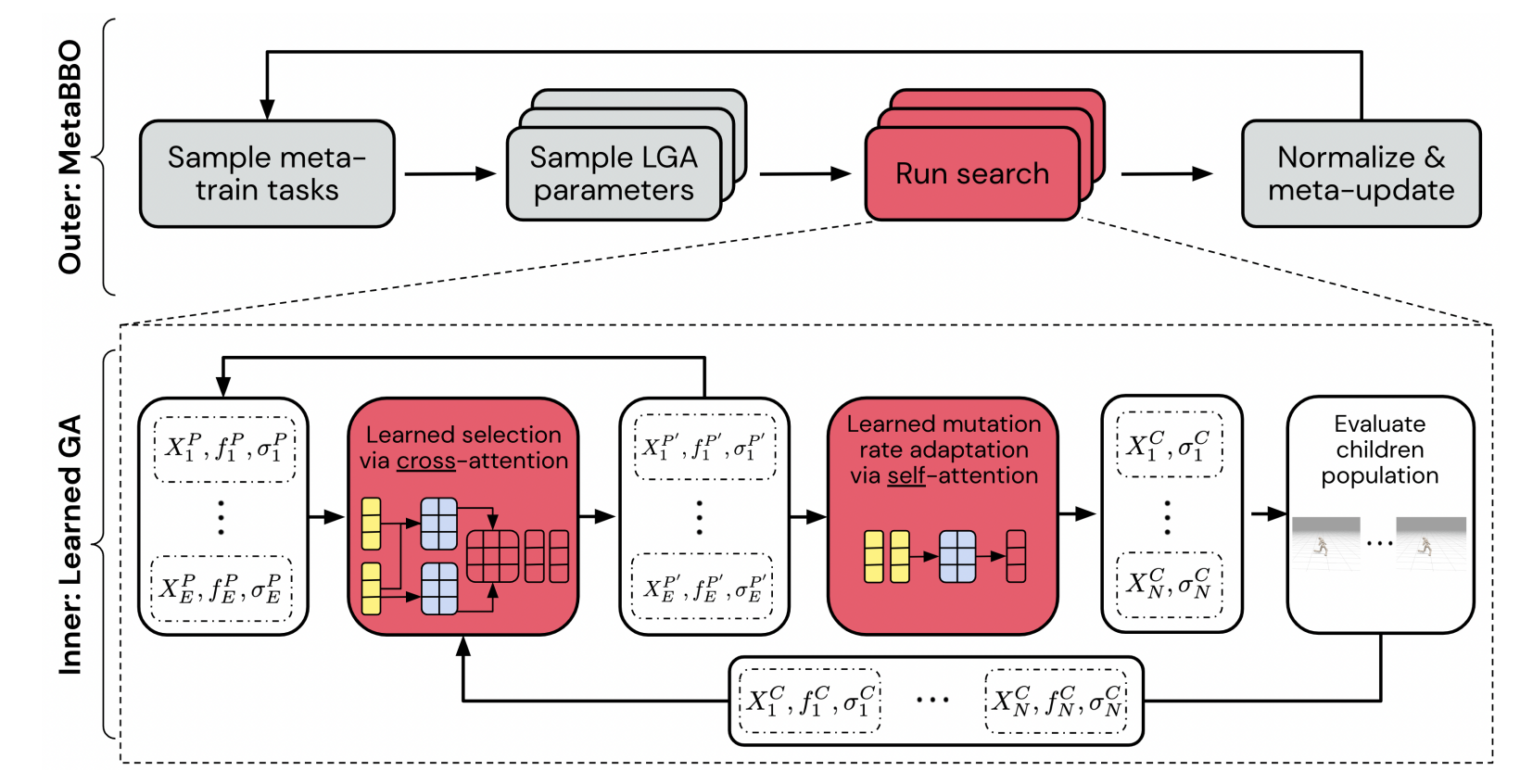

Matthew Jackson*, Chris Lu*, Louis Kirsch, Robert Lange, Shimon Whiteson, Jakob Foerster *Equal Contribution ICLR 2024 Also at the NeurIPS 2023 Workshop on Agent Learning in Open-Endedness |

|

Andrei Lupu, Chris Lu, Jarek Luca Liesen, Robert Tjarko Lange, Jakob Foerster ICLR 2024 |

|

Kitty Fung*, Qizhen Zhang*, Chris Lu, Jia Wan, Timon Willi, Jakob Foerster *Equal Contribution AAMAS 2024 (Oral) Also at the ICML 2023 Workshop on New Frontiers in Learning, Control, and Dynamical Systems |

|

Akbir Khan*, Timon Willi*, Newton Kwan*, Andrea Tacchetti, Chris Lu, Edward Grefenstette, Tim Rocktäschel, Jakob Foerster *Equal Contribution AAMAS 2024 (Oral) Also at the Games, Agents, and Incentives Workshop at AAMAS 2023 |

|

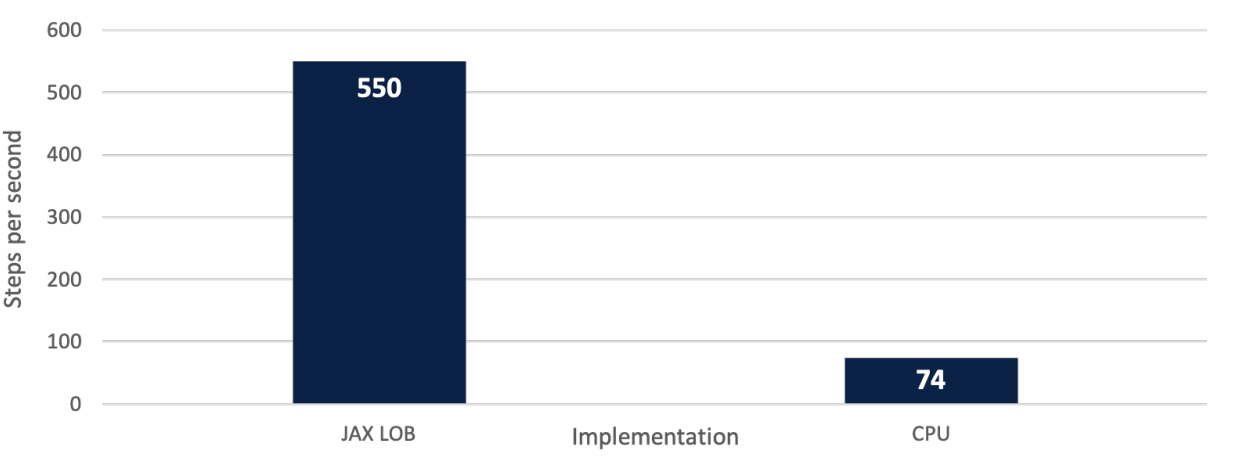

Sascha Frey*, Kang Li*, Peer Nagy*, Silvia Sapora, Chris Lu, Stefan Zohren, Jakob Foerster, Anisoara Calinescu *Equal Contribution International Conference on AI in Finance 2023 (Best Academic Paper Award) |

|

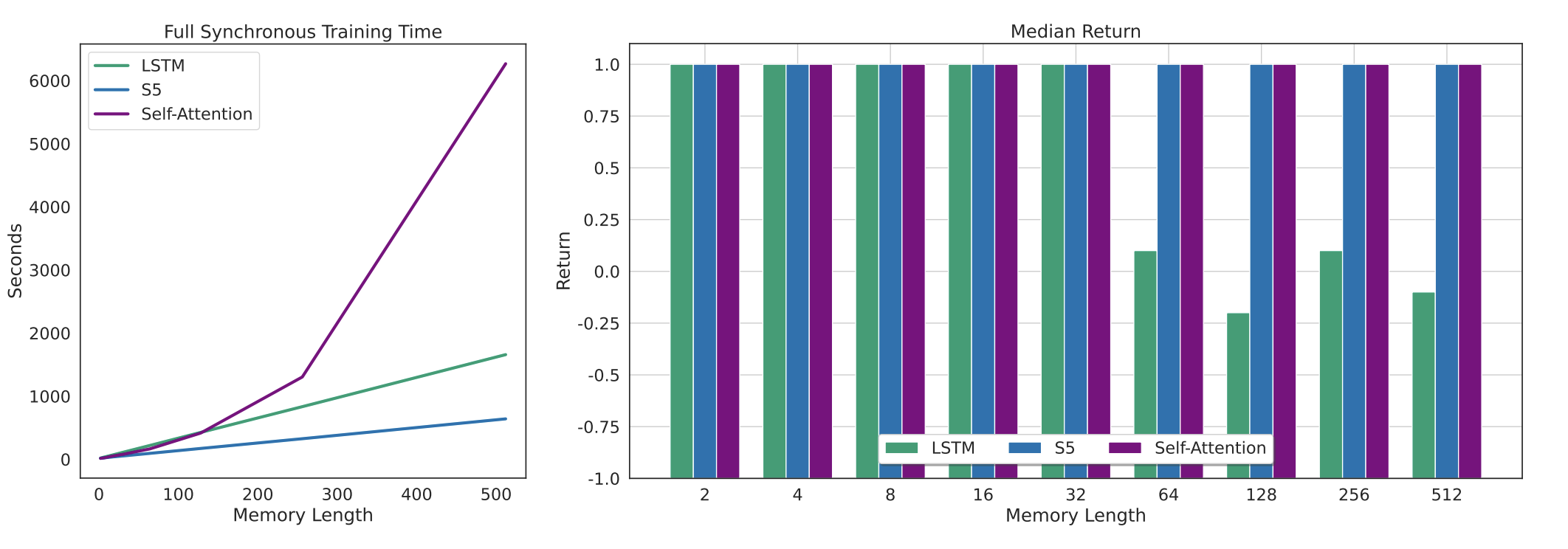

Chris Lu, Yannick Schroecker, Albert Gu, Emilio Parisotto, Jakob Foerster, Satinder Singh, Feryal Behbahani NeurIPS 2023 Also at the Workshop on New Frontiers in Learning, Control, and Dynamical Systems @ ICML 2023 |

|

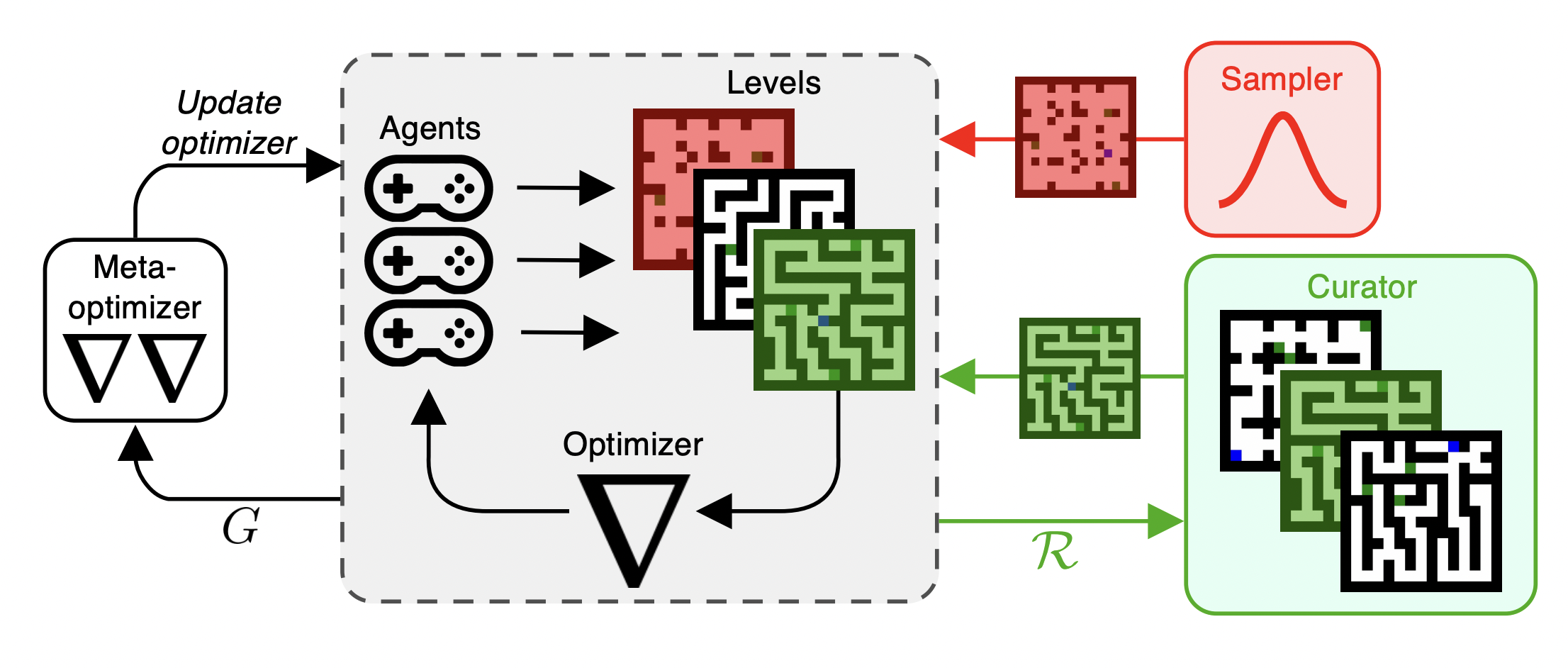

Matthew Thomas Jackson, Minqi Jiang, Jack Parker-Holder, Risto Vuorio, Chris Lu, Gregory Farquhar, Shimon Whiteson, Jakob Nicolaus Foerster NeurIPS 2023 |

|

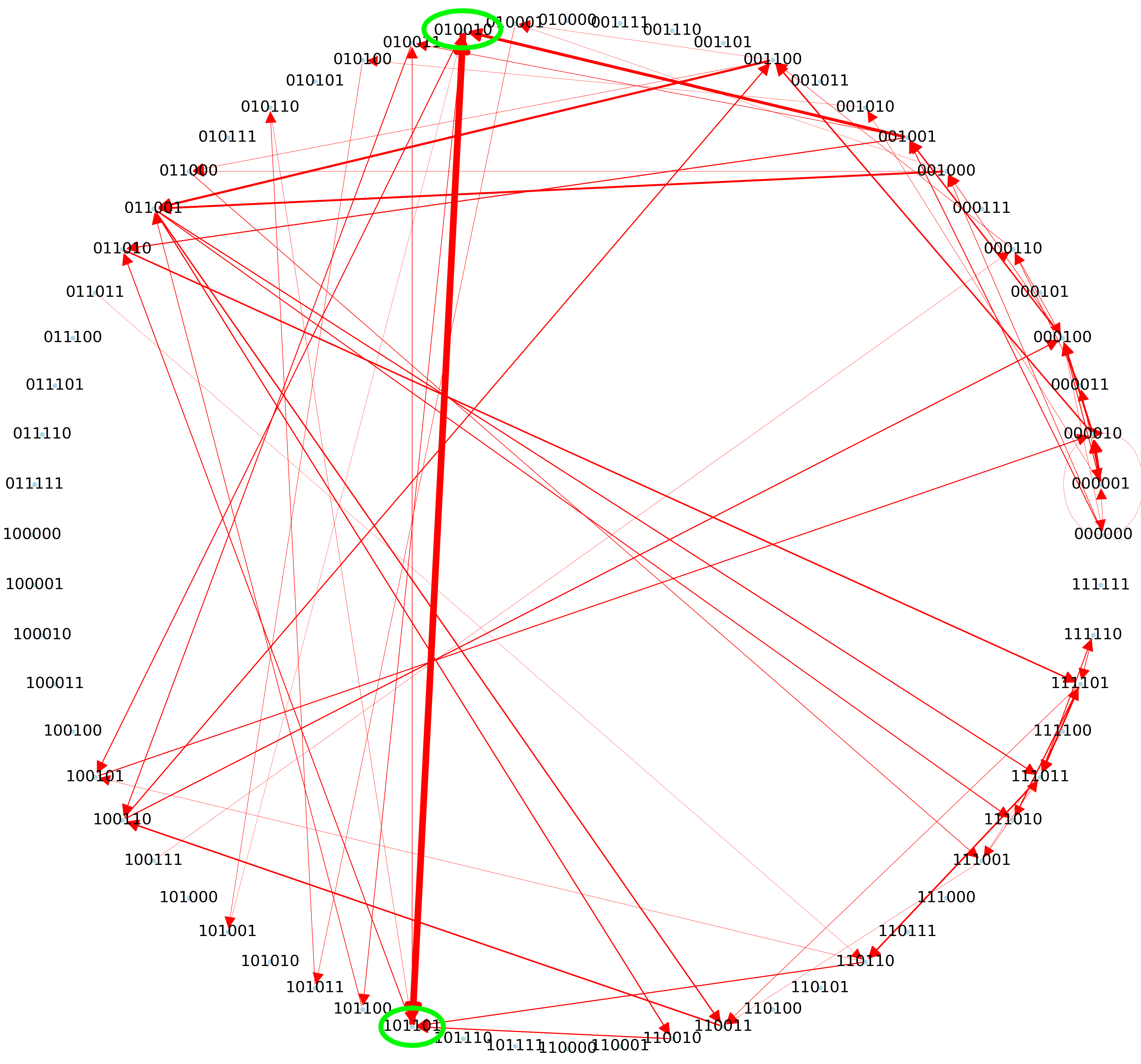

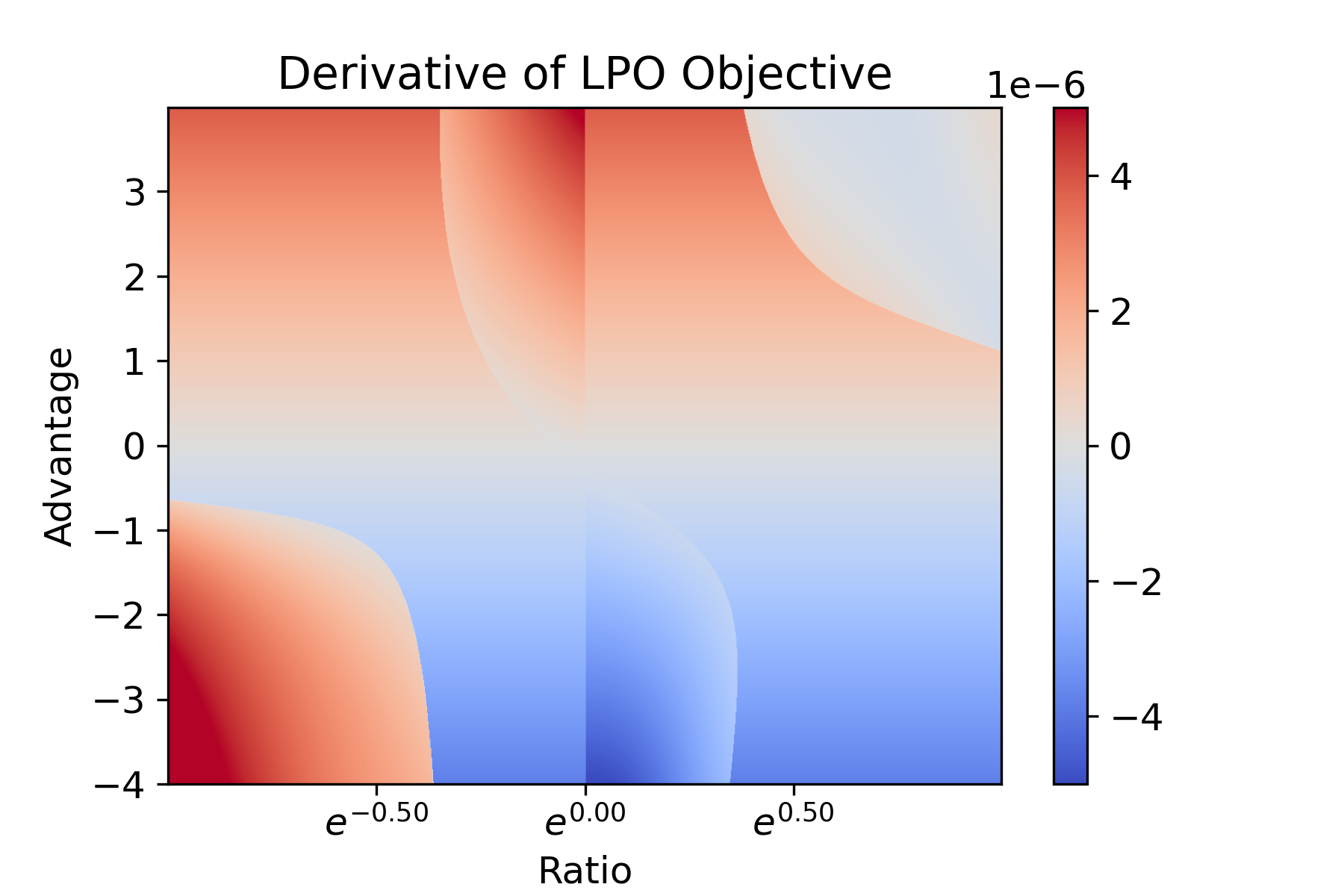

Chris Lu, Timon Willi, Alistair Letcher, Jakob Foerster ICML 2023 Also at the Workshop on Machine Learning for Cybersecurity @ ICML 2022 (Spotlight) |

|

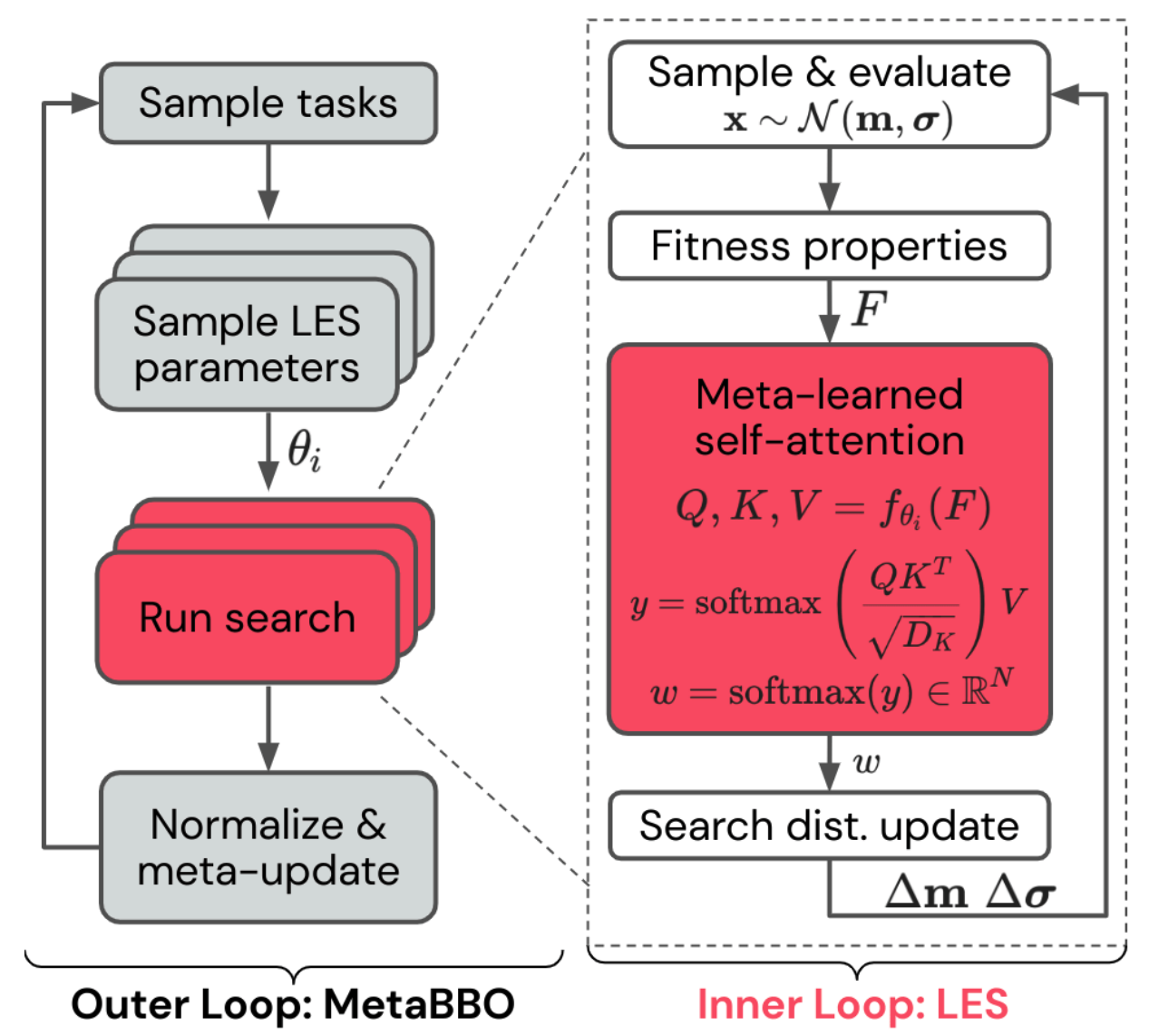

Robert Tjarko Lange, Tom Schaul, Yutian Chen, Chris Lu, Tom Zahavy, Valentin Dalibard, Sebastian Flennerhag GECCO 2023 |

|

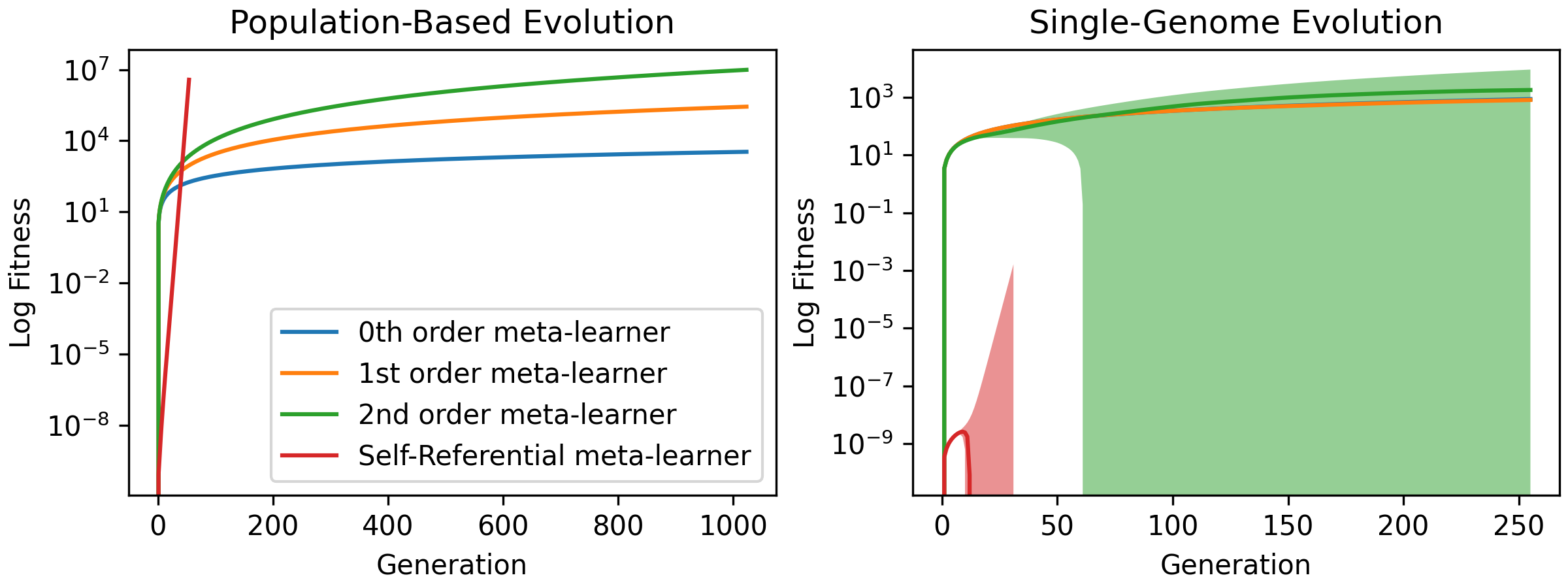

Chris Lu, Sebastian Towers, Jakob Foerster ALIFE 2023 (Oral) |

|

Robert Tjarko Lange, Tom Schaul, Yutian Chen, Tom Zahavy, Valentin Dalibard, Chris Lu, Satinder Singh, Sebastian Flennerhag ICLR 2023 |

|

Chris Lu*, Jakub Grudzien Kuba*, Alistair Letcher, Luke Metz, Christian Schroeder de Witt, Jakob Foerster *Equal Contribution NeurIPS 2022 Also at the Decision Awareness in Reinforcement Learning Workshop @ ICML 2022 (Oral) |

|

Stephen Zhao, Chris Lu, Roger Baker Grosse, Jakob Foerster NeurIPS 2022 |

|

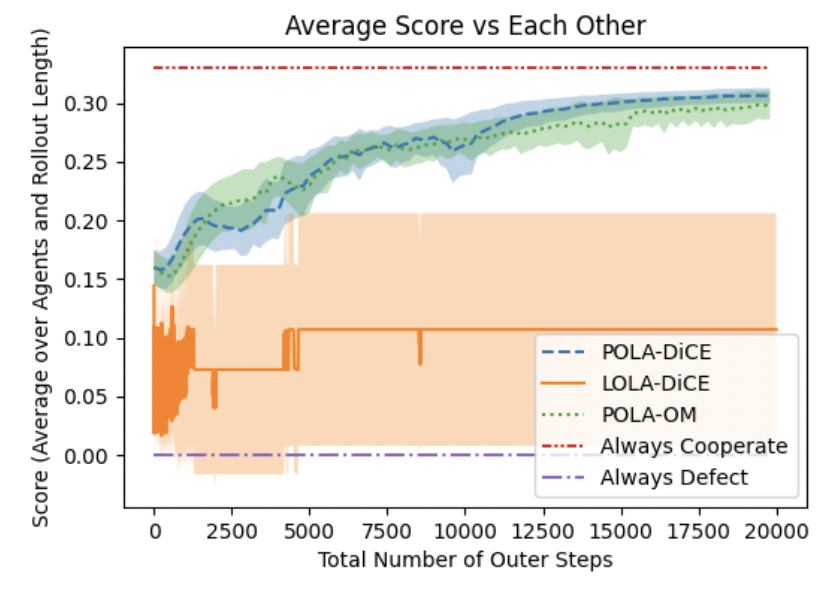

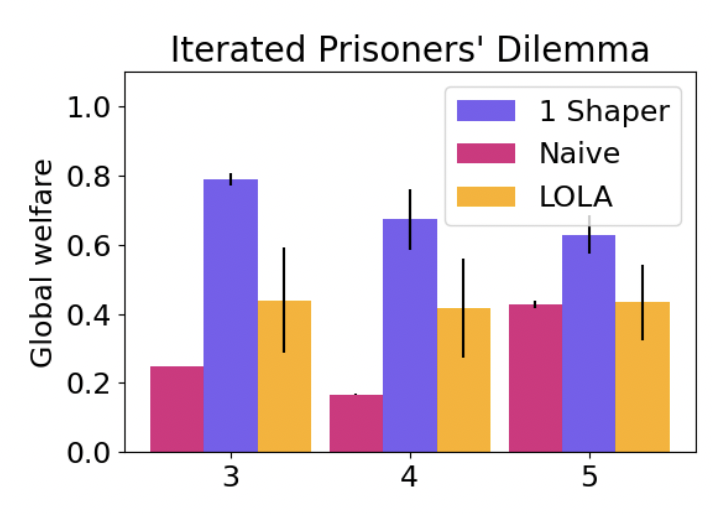

Chris Lu, Timon Willi, Christian Schroeder de Witt, Jakob Foerster ICML 2022 (Spotlight) Also at the ICLR 2022 Workshop on Gamification and Multiagent Solutions (Spotlight) |

|

Qizhen Zhang, Chris Lu, Animesh Garg, Jakob Foerster AAMAS 2022 (Oral Presentation) |

|

Deepak Pathak*, Chris Lu*, Trevor Darrell, Phillip Isola, Alexei A. Efros *Equal Contribution NeurIPS 2019 (Spotlight) Winner of Virtual Creatures Competition (link) |

|

Alexandra Souly, Timon Willi, Akbir Khan, Robert Kirk, Chris Lu, Edward Grefenstette, Tim Rocktäschel Multi-Agent Security Workshop @ NeurIPS 2023 (Oral) |

|

Credit for the template to Jon Barron. |